Demo 3: Inner-Product Spaces#

Demo by Magnus Troen. Revised March 2026 by shsp.

from sympy import*

from dtumathtools import*

init_printing()

The usual inner product \(\left<\cdot, \cdot \right>\) in an inner-product space \(V\subseteq \mathbb{F}^n\) is given by:

\[\left<\boldsymbol{x}, \boldsymbol{y} \right> = \sum_{k=1}^{n} x_k \overline{y_k}\]

for all \(\boldsymbol{x},\boldsymbol{y} \in V\). See more in the textbook. Sympy does not have a dedicated inner-product command, so we will handle each vector space manually.

Inner Product on \(\Bbb R^n\)#

For \(\Bbb R^n\), the above-mentioned usual inner product simplifies to the well-known dot product. So, we can simply use Sympy’s .dot command in case of real vectors:

x1 = Matrix([1,2,3])

x2 = Matrix([4,5,6])

x1.dot(x2), x2.dot(x1)

A quick manual check with \(\boldsymbol x_1 = (1,2,3)\) and \(\boldsymbol x_2 = (4,5,6)\) agrees:

\[\left<\boldsymbol x_1, \boldsymbol x_2\right> = 1\cdot 4 + 2\cdot 5 + 3\cdot 6 = 32.\]

Note that the order of the vectors in this inner product doesn’t matter.

Inner Product on \(\Bbb C^n\)#

For \(\Bbb C^n\), the above-mentioned inner product definition is not directly a dot product, rather it is a variant of a dot product where the second vector becomes conjugated. Fortunately, all it takes in Sympy is adding the argument conjugate_convention = 'right' to the .dot command:

x3 = Matrix([1, 2])

x4 = Matrix([2-I, 2])

x3.dot(x4, conjugate_convention = 'right')

If this feels too long to type out, then feel free to make your own script such as this one:

def inner(x: Matrix, y: Matrix):
    '''
    Computes the inner product of two vectors of same size
    '''
    return x.dot(y, conjugate_convention = 'right')

MutableDenseMatrix.inner = inner

ImmutableDenseMatrix.inner = inner

Instead of x3.dot(x4, conjugate_convention = 'right') you can now simply type x3.inner(x4) or inner(x3,x4):

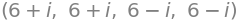

x3.inner(x4), inner(x3,x4), x4.inner(x3), inner(x4,x3)

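Note how the order of vectors does matter in this case, as expected. A quick manual check with the two complex vectors \(\boldsymbol x_3 = (1,2)\) and \(\boldsymbol x_4 = (2 - i,2)\) agrees:

\[\left<\boldsymbol x_3, \boldsymbol x_4\right> = 1\cdot\overline{(2-i)} + 2\cdot\overline{2} = 6 + i, \qquad \left<\boldsymbol x_4, \boldsymbol x_3\right> = (2-i)\cdot\overline{1} + 2\cdot\overline{2} = 6 - i.\]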

Note that our home-made Python function inner also is usable for \(\Bbb R^n\), since it simplifies to the dot product on real vectors where conjugation has no influence.
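To connect the conjugate_convention = 'right' option with the defining sum, here is a minimal sketch that writes the sum out by hand. The helper name inner_manual is introduced only for this illustration; it is not part of Sympy or of the demo code above.

```python
from sympy import Matrix, I, conjugate, simplify

def inner_manual(x, y):
    # Sum of x_k * conj(y_k): the second vector is conjugated
    return sum(a * conjugate(b) for a, b in zip(x, y))

x3 = Matrix([1, 2])
x4 = Matrix([2 - I, 2])

builtin = x3.dot(x4, conjugate_convention='right')
manual = inner_manual(x3, x4)

# The built-in and the hand-written computation should agree (difference 0)
print(simplify(builtin - manual))

# Swapping the arguments should conjugate the result (difference 0)
print(simplify(inner_manual(x4, x3) - conjugate(manual)))
```

The second check illustrates conjugate symmetry, which is why the order of the vectors matters on \(\Bbb C^n\) but not on \(\Bbb R^n\).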

Norm#

The textbook defines the norm as \(\Vert \boldsymbol{x} \Vert = \sqrt{\left<\boldsymbol{x}, \boldsymbol{x} \right>}\), which with the usual inner product on vectors simply becomes the Pythagorean Theorem. For real vectors in particular, the norm is of course typically thought of as vector length.

Instead of manually typing out the Pythagorean theorem, Sympy has the norm command for us to use:

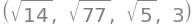

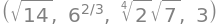

x1.norm(), x2.norm(), x3.norm(), x4.norm()

Note that this version of the norm also is known as the 2-norm (or the Euclidean norm or the \(\ell^2\)-norm), denoted by \(||\cdot||_2\) if that has to be clarified. You can compute other norms as follows - here \(||\boldsymbol x_1||_2\), \(||\boldsymbol x_1||_3\), \(||\boldsymbol x_1||_4\), and \(||\boldsymbol x_1||_\infty\) - but we won’t dive more into these in this course:

x1.norm(2), x1.norm(3), x1.norm(4), x1.norm(oo)
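For reference, the \(p\)-norm of a vector is \(\left(\sum_k |x_k|^p\right)^{1/p}\), and the \(\infty\)-norm is the largest absolute value among the entries. The following is a small sketch of those formulas compared against Sympy's norm command; p_norm_manual is a hypothetical helper name used only here.

```python
from sympy import Matrix, Abs, Max, Rational, oo, simplify

def p_norm_manual(x, p):
    # Infinity norm: largest absolute value among the entries
    if p == oo:
        return Max(*[Abs(a) for a in x])
    # General p-norm: (sum of |x_k|^p)^(1/p)
    return sum(Abs(a)**p for a in x) ** Rational(1, p)

x1 = Matrix([1, 2, 3])

for p in (2, 3, 4, oo):
    # Each difference should simplify to 0
    print(p, simplify(x1.norm(p) - p_norm_manual(x1, p)))
```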

If you prefer, the general norm formula \(\Vert \boldsymbol{x} \Vert = \sqrt{\left<\boldsymbol{x}, \boldsymbol{x} \right>}\) is easily typed out in code manually:

sqrt(x4.inner(x4)).simplify() , sqrt(x1.dot(x1)).simplify()

But be careful to use the correct inner product calculation (if the norm is not a real number, then a wrong definition has been used):

sqrt(x4.inner(x4)).simplify() , sqrt(x4.dot(x4)).simplify()

Projections onto a Line#

The Projection Formula#

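The textbook explains how the projection of a vector \(\boldsymbol{x} \in \mathbb{F}^n\) onto a line \(Y = \operatorname{span}\{\boldsymbol{y}\}\) spanned by a nonzero vector \(\boldsymbol{y} \in \mathbb{F}^n\) can be computed as

\[\operatorname{proj}_Y(\boldsymbol{x}) = \frac{\left<\boldsymbol{x},\boldsymbol{y}\right>}{\left<\boldsymbol{y},\boldsymbol{y}\right>}\boldsymbol{y} = \left<\boldsymbol{x},\boldsymbol{u}\right>\boldsymbol{u},\]

where \(\boldsymbol{u} = \frac{\boldsymbol{y}}{||\boldsymbol{y}||}\).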

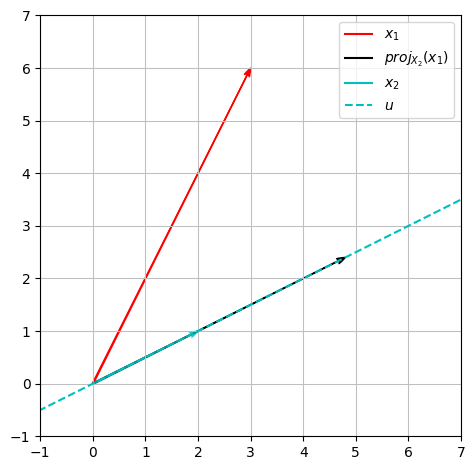

As an example, let \(\boldsymbol{x}_1, \boldsymbol{x}_2 \in \mathbb{R}^2\) be given by:

\[\boldsymbol{x}_1 = (3,6), \quad \boldsymbol{x}_2 = (2,1).\]

Let us project \(\boldsymbol{x}_1\) onto the line given by \(U = \operatorname{span}\{\boldsymbol{x}_2\}\):

x1 = Matrix([3,6])

x2 = Matrix([2,1])

projU_x1 = x1.inner(x2)/x2.inner(x2) * x2 # Alternatively: inner(x1,x2)/x2.norm()**2 * x2

projU_x1

Since we are working in \(\mathbb{R}^2\), this can be illustrated as follows:

x = symbols('x')

plot_x1 = dtuplot.quiver((0,0),x1,rendering_kw={'color':'r', 'label': '$x_1$'}, xlim = (-1,7), ylim = (-1,7), show = False, aspect='equal')

plot_x2 = dtuplot.quiver((0,0),x2,rendering_kw={'color':'c', 'label': '$x_2$', 'alpha': 0.7}, show = False)

plot_projX2 = dtuplot.quiver((0,0),projU_x1,rendering_kw={'color':'k', 'label': '$proj_U(x_1)$'},show = False)

plot_X2 = dtuplot.plot(x2[1]/x2[0] * x, label = '$U$',rendering_kw={'color':'c', 'linestyle': '--'}, legend = True,show = False)

(plot_x1 + plot_X2 + plot_projX2 + plot_x2).show()
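A useful sanity check on any projection onto a line is that the leftover part, \(\boldsymbol x_1 - \operatorname{proj}_U(\boldsymbol x_1)\), is orthogonal to the line. Below is a minimal sketch of that check using only Sympy; the function name proj_line is an assumption made for this example.

```python
from sympy import Matrix

def proj_line(x, y):
    # Projection of x onto span{y}; y is assumed to be nonzero
    num = x.dot(y, conjugate_convention='right')
    den = y.dot(y, conjugate_convention='right')
    return num / den * y

x1 = Matrix([3, 6])
x2 = Matrix([2, 1])

p = proj_line(x1, x2)
residual = x1 - p

print(p.T)                # the projection, a multiple of x2
print(residual.dot(x2))   # inner product of the residual with x2
```

If the last value were nonzero, the computed vector would not be the orthogonal projection.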

The Projection Matrix#

As an example, let us define some vectors in \(\Bbb C^4\):

c1 = Matrix([2+I, 3, 5-I, 6])

c2 = Matrix([1, I, 3-I, 2])

c1,c2

The textbook explains how the projection \(\operatorname{Proj}_{\boldsymbol c_2}\), projecting input vectors onto the line spanned by \(\boldsymbol c_2\), can be described as the linear map:

\[\operatorname{Proj}_{\boldsymbol c_2}(\boldsymbol x) = \left<\boldsymbol x, \boldsymbol u\right>\boldsymbol u = \boldsymbol u \boldsymbol u^* \boldsymbol x,\]

where \(\boldsymbol{u} = \boldsymbol c_2/||\boldsymbol c_2||\). The mapping matrix \(P=\boldsymbol{u}\boldsymbol{u}^*\) is typically called a projection matrix. Note that the asterisk \(^*\) denotes the adjoint, meaning the conjugate transpose of a vector or matrix: \(\boldsymbol u^*=\boldsymbol{\bar u}^T\).

u = simplify(c2/c2.norm())

P = expand(u*u.adjoint())

u,P

With this matrix it is easy to do projections of vectors onto the line spanned by \(\boldsymbol c_2\), e.g. a projection of \(\boldsymbol c_1\), that is \(\operatorname{Proj}_{\boldsymbol c_2}(\boldsymbol c_1)\):

simplify(P*c1)

A comparison with the previous method agrees:

simplify(c1.inner(u)/u.inner(u)*u)

The projection of \(\boldsymbol c_2\) onto the line spanned by \(\boldsymbol c_2\), so \(\operatorname{Proj}_{\boldsymbol c_2}(\boldsymbol c_2)\), should of course not change the vector \(\boldsymbol c_2\) - let’s check:

P*c2, c2

At first, this doesn’t look promising, but don’t forget to simplify:

simplify(P*c2), c2

As expected. The projection matrix \(P\) is by construction Hermitian, meaning \(P = P^*\). This can be verified manually:

simplify(P-P.adjoint())

or with a dedicated command:

P.is_hermitian

True
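Besides being Hermitian, a projection matrix is idempotent, \(P^2 = P\), since projecting an already projected vector changes nothing. The sketch below checks this for the same \(\boldsymbol c_2\), using only Sympy; it is an illustration rather than part of the textbook's procedure.

```python
from sympy import Matrix, I, zeros

c2 = Matrix([1, I, 3 - I, 2])
u = c2 / c2.norm()
P = (u * u.adjoint()).expand()

# Idempotent: projecting twice changes nothing
print((P * P - P).expand() == zeros(4, 4))

# The trace equals the dimension of the line, which is 1
print(P.trace())

# Vectors already on the line are left unchanged
print((P * c2).expand() == c2)
```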

Orthonormal Bases#

The textbook defines a set of vectors as orthonormal when all vectors in the set have a norm of \(1\) and they all are mutually orthogonal, meaning with inner products of \(0\).

Orthonormal bases prove to be incredibly practical. One example is change of basis, which is much easier when using orthonormal bases. If we, for example, are given an orthonormal basis \(\beta=(\boldsymbol{u}_1,\boldsymbol{u}_2,\ldots,\boldsymbol{u}_n)\) for a vector space \(V\), then, according to the textbook, a coordinate vector \(\phantom{ }_\beta\boldsymbol{x}\) with respect to basis \(\beta\) of a vector \(\boldsymbol x\in V\) can be found as:

\[\phantom{ }_\beta\boldsymbol{x} = \left(\left<\boldsymbol x, \boldsymbol u_1\right>, \left<\boldsymbol x, \boldsymbol u_2\right>, \ldots, \left<\boldsymbol x, \boldsymbol u_n\right>\right).\]

(Compare this with Mathematics 1a, where we were to solve linear equation systems in order to find coordinate vectors.)

Manual Check of Orthonormality#

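As an example, we are given the following list of three vectors in \(\Bbb R^3\):

\[\beta = (\boldsymbol q_1, \boldsymbol q_2, \boldsymbol q_3), \quad \boldsymbol q_1 = \left(\tfrac{\sqrt 3}{3}, \tfrac{\sqrt 3}{3}, \tfrac{\sqrt 3}{3}\right), \quad \boldsymbol q_2 = \left(\tfrac{\sqrt 2}{2}, 0, -\tfrac{\sqrt 2}{2}\right), \quad \boldsymbol q_3 = \boldsymbol q_1 \times \boldsymbol q_2,\]

where \(\boldsymbol q_3\) is given as the cross product of the first two.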

q1 = Matrix([sqrt(3)/3, sqrt(3)/3, sqrt(3)/3])

q2 = Matrix([sqrt(2)/2, 0, -sqrt(2)/2])

q3 = q1.cross(q2)

q1,q2,q3

It is claimed that these form an orthonormal basis for \(\mathbb{R}^3\). Let us check that claim by manually checking each inner product combination:

q1.inner(q1), q1.inner(q2), q1.inner(q3), q2.inner(q2), q2.inner(q3), q3.inner(q3)

The requirements are fulfilled, so we have confirmed that \(\beta\) consists of three orthonormal vectors. Orthonormal vectors are linearly independent, and three linearly independent vectors in \(\Bbb R^3\) span \(\Bbb R^3\). Hence we conclude that \(\beta\) forms an orthonormal basis for \(\mathbb{R}^3\).

Now we can write the coordinate vector of a vector such as for example \(\boldsymbol{x} = (1,2,3)\) with respect to the \(\beta\) basis:

x = Matrix([1,2,3])

beta_x = Matrix([x.inner(q1) , x.inner(q2) , x.inner(q3)])

beta_x
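Two quick checks can make this more concrete: all pairwise inner products can be collected in one matrix product, and the coordinate vector should rebuild \(\boldsymbol x\) when used as weights on the basis vectors. The following is a minimal sketch using only Sympy; since the vectors are real, the plain .dot command is used instead of the home-made inner function.

```python
from sympy import Matrix, sqrt, eye, simplify

q1 = Matrix([sqrt(3)/3, sqrt(3)/3, sqrt(3)/3])
q2 = Matrix([sqrt(2)/2, 0, -sqrt(2)/2])
q3 = q1.cross(q2)
Q = Matrix.hstack(q1, q2, q3)

# Entry (i, j) of Q^T Q is the inner product of q_i and q_j,
# so orthonormality means Q^T Q is the identity matrix
gram = (Q.T * Q).applyfunc(simplify)
print(gram == eye(3))

# Coordinates of x with respect to beta, one inner product per basis vector
x = Matrix([1, 2, 3])
beta_x = Matrix([x.dot(q) for q in (q1, q2, q3)])

# Rebuilding x from its coordinates should return the original vector
rebuilt = (Q * beta_x).applyfunc(simplify)
print(rebuilt == x)
```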

Check of Orthonormality using Rank#

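As another example, consider the list of vectors \(\gamma = (\boldsymbol v_1,\boldsymbol v_2,\boldsymbol v_3,\boldsymbol v_4)\), all from \(\mathbb{C}^4\), where

\[\boldsymbol v_1 = (2i,0,0,0), \quad \boldsymbol v_2 = (i,1,1,0), \quad \boldsymbol v_3 = (0,i,1,1), \quad \boldsymbol v_4 = (0,0,0,i).\]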

v1 = Matrix([2*I,0,0, 0])

v2 = Matrix([I, 1, 1, 0])

v3 = Matrix([0, I, 1, 1])

v4 = Matrix([0, 0, 0, I])

We wish to form an orthonormal basis of the vector space spanned by \(\gamma\). We can first try to check whether the vectors in \(\gamma\) span all of \(\Bbb C^4\) or just a subspace within it:

V = Matrix.hstack(v1,v2,v3,v4)

V.rref(pivots=False)

The reduced row echelon form is the identity matrix, so the rank is four. This confirms that the vectors in \(\gamma\) are linearly independent, and since there are four of them we conclude that \(\gamma\) spans and constitutes a basis for \(\Bbb C^4\), though not necessarily an orthonormal one. If you check for orthogonality and unit length, you’ll see that the vectors need adjustment. The Gram-Schmidt procedure will fix this for us!
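The suggested check for orthogonality and unit length can be done in one go with the matrix \(V^*V\), whose entries are all the pairwise inner products. Here is a short sketch under the assumption that only Sympy is available; the rank command is used as an alternative to reading the rank off the reduced row echelon form.

```python
from sympy import Matrix, I, eye

v1 = Matrix([2*I, 0, 0, 0])
v2 = Matrix([I, 1, 1, 0])
v3 = Matrix([0, I, 1, 1])
v4 = Matrix([0, 0, 0, I])
V = Matrix.hstack(v1, v2, v3, v4)

# Rank 4 means the four columns are linearly independent
print(V.rank())

# Entry (i, j) of V^* V is the inner product <v_j, v_i>;
# for an orthonormal list this would be the identity matrix
gram = (V.adjoint() * V).expand()
print(gram == eye(4))
```

Diagonal entries different from \(1\) reveal vectors that are not unit length, and nonzero off-diagonal entries reveal pairs that are not orthogonal.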

The Gram-Schmidt Procedure#

We will now carry out the Gram-Schmidt procedure on the example of four vectors \(\boldsymbol v_1,\boldsymbol v_2,\boldsymbol v_3,\boldsymbol v_4\) in \(\Bbb C^4\) from the previous section. That is, we will be “adjusting” the four vectors such that we obtain four new orthonormal vectors that span the same vector space \(\Bbb C^4\).

According to the textbook, following the Gram-Schmidt procedure means first normalizing \(\boldsymbol{v}_1\) in order to obtain the first new vector \(\boldsymbol{u}_1\):

u1 = v1/v1.norm()

u1

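after which the remaining new vectors are found one by one with the calculation:

\[\boldsymbol w_k = \boldsymbol v_k - \sum_{j=1}^{k-1} \left<\boldsymbol v_k, \boldsymbol u_j\right>\boldsymbol u_j, \quad k = 2, 3, 4,\]

each finished with a normalization \(\boldsymbol u_k = \boldsymbol w_k / \Vert \boldsymbol w_k \Vert\):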

w2 = simplify(v2 - v2.inner(u1)*u1)

u2 = expand(w2/w2.norm())

w3 = simplify(v3 - v3.inner(u1)*u1 - v3.inner(u2)*u2)

u3 = expand(w3/w3.norm())

w4 = simplify(v4 - v4.inner(u1)*u1 - v4.inner(u2)*u2 - v4.inner(u3)*u3)

u4 = expand(w4/w4.norm())

u1,u2,u3,u4

We should now check to confirm that \(\boldsymbol{u}_1,\boldsymbol{u}_2,\boldsymbol{u}_3,\boldsymbol{u}_4\) indeed are orthonormal - we will do one such check here and leave the rest for the reader:

simplify(u1.inner(u2)) # And so on

The Gram-Schmidt procedure can be time-consuming for large vector sets, but fortunately Sympy has the command GramSchmidt that lets us find the result much quicker:

y1,y2,y3,y4 = GramSchmidt([v1,v2,v3,v4], orthonormal=True)

y1,y2,expand(y3),expand(y4)
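The step-by-step calculation generalizes naturally to a loop. The sketch below is one possible way to write it, assuming linearly independent input vectors and the usual inner product with the second vector conjugated; the function name gram_schmidt is our own and should not be confused with Sympy's GramSchmidt command.

```python
from sympy import Matrix, I, eye

def inner(x, y):
    # Usual inner product: the second vector is conjugated
    return x.dot(y, conjugate_convention='right')

def gram_schmidt(vectors):
    # Assumes the input vectors are linearly independent
    basis = []
    for v in vectors:
        # Subtract the projections onto the vectors found so far
        w = v
        for u in basis:
            w = w - inner(v, u) * u
        w = w.expand()
        # Normalize to unit length
        basis.append((w / w.norm()).expand())
    return basis

vs = [Matrix([2*I, 0, 0, 0]), Matrix([I, 1, 1, 0]),
      Matrix([0, I, 1, 1]), Matrix([0, 0, 0, I])]
us = gram_schmidt(vs)

# Orthonormal columns give U^* U equal to the identity matrix
U = Matrix.hstack(*us)
print((U.adjoint() * U).expand() == eye(4))
```

Checking \(U^*U\) against the identity matrix covers all the pairwise orthonormality checks at once.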

Unitary and (Real) Orthogonal Matrices#

According to the textbook, a square matrix \(U\) is called unitary if it satisfies

\[UU^* = U^*U = I.\]

For a real matrix \(Q\in \mathsf M_n(\mathbb{R})\), the adjoint \(Q^*=\bar Q^T\) simplifies to just the transpose \(Q^T\), and real square matrices are called (real) orthogonal, if they satisfy

\[QQ^T = Q^TQ = I.\]

Note that (real) orthogonal matrices also are unitary.

The textbook follows up with proving that a matrix is unitary, respectively (real) orthogonal, if and only if its columns are orthonormal. A matrix \(U = \left[\boldsymbol{u}_1, \boldsymbol{u}_2, \boldsymbol{u}_3, \boldsymbol{u}_4\right]\) formed from the vectors we obtained via the Gram-Schmidt procedure earlier, will thus be a unitary matrix:

U = Matrix.hstack(u1,u2,u3,u4)

U

The adjoint matrix \(U^*\) of \(U\) is:

U.adjoint(), conjugate(U.T)

That \(U\) is indeed unitary can be verified by:

simplify(U*U.adjoint()), simplify(U.adjoint()*U)

A matrix \(Q = \left[\boldsymbol{q}_1, \boldsymbol{q}_2, \boldsymbol{q}_3\right]\) formed from the real \(\boldsymbol q\) vectors that we confirmed to be orthonormal earlier, is (real) orthogonal:

Q = Matrix.hstack(q1,q2,q3)

Q

That \(Q\) is indeed (real) orthogonal can be verified by:

simplify(Q*Q.T), simplify(Q.T*Q)
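One consequence of the defining property is that a unitary matrix preserves inner products, and hence norms, and that its inverse is simply its adjoint. The following sketch illustrates this with a small \(2\times 2\) unitary matrix and two vectors chosen here purely as an example; they do not come from the textbook.

```python
from sympy import Matrix, I, sqrt, eye, zeros, simplify

def inner(x, y):
    # Usual inner product: the second vector is conjugated
    return x.dot(y, conjugate_convention='right')

# A small unitary matrix chosen for illustration
U = Matrix([[1, I], [I, 1]]) / sqrt(2)
print((U.adjoint() * U).expand() == eye(2))

x = Matrix([1 + 2*I, 3])
y = Matrix([2, -I])

# <Ux, Uy> equals <x, y>, so the difference should be 0
print(simplify(inner(U*x, U*y) - inner(x, y)))

# The inverse of a unitary matrix is its adjoint (zero matrix expected)
print((U.inv() - U.adjoint()).applyfunc(simplify))
```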